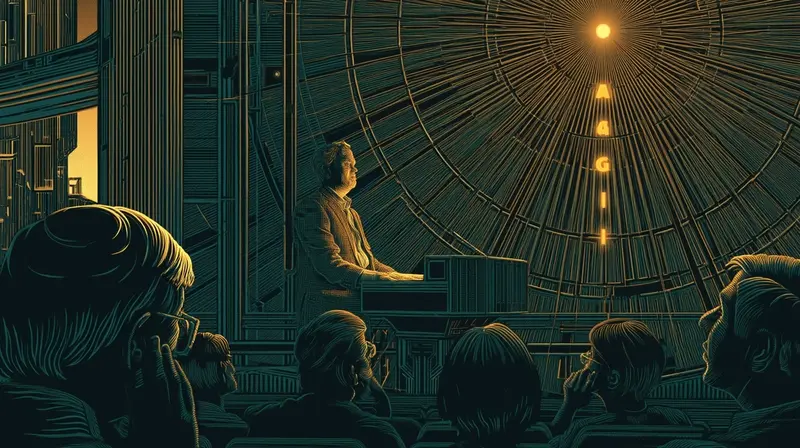

On March 23, Jensen Huang told Lex Fridman that Nvidia has "achieved AGI." The same day, OpenAI offered private equity firms a guaranteed minimum return of 17.5% to secure joint ventures, beating Anthropic's terms. And separately, Axios reported that OpenAI is in talks to buy 5 gigawatts of electricity by 2030 from Helion Energy — the fusion startup backed by Sam Altman, who stepped down as Helion's board chair ahead of the deal. Three data points from one day. A declaration that the goal has been reached. A guarantee for investors who apparently need one. And a purchase of electricity from a technology that doesn't yet produce commercial power, to run the intelligence that has already been achieved.

The Word

AGI — artificial general intelligence — has meant different things to different people for nearly three decades. Mark Gubrud coined the term in a 1997 research paper. For most of that history, it referred to a system capable of performing any intellectual task a human can — a benchmark so distant that debating its arrival felt academic.

Then it started arriving, or seeming to, and the word became contested.

- Jul 2024 OpenAI publishes five levels to track AGI progress: Chatbots, Reasoners, Agents, Innovators, Organizations. The company places its models at level two.

- Jan 2025 Sam Altman writes: "we are now confident we know how to build AGI as we have traditionally understood it."

- Apr 2025 Researchers describe models like o3 and Gemini 2.5 Pro as "Jagged AGI" — unreliable, brilliant in spots, failing unpredictably.

- Jun 2025 The Financial Times reports the tech industry has no consensus on what AGI or ASI actually means.

- Mar 2026 Jensen Huang, CEO of the company that sells AI chips to all of them, says: "we've achieved AGI."

The progression is legible. "We're at level two." Then "we know how to build it." Then "it's jagged — brilliant but unreliable." Then "nobody agrees what it means." Then the CEO who sells the hardware declares it achieved. Each step moved the word closer to something the current products could fit inside.

The Clause

If AGI were merely a philosophical question, the definition wouldn't matter. But it isn't.

In late 2023, The Information revealed that Microsoft and OpenAI had a contractual agreement that defined AGI and tied it to specific business terms — including conditions under which Microsoft's access to OpenAI's models could change. By June 2025, Microsoft was pushing to remove the AGI clause entirely. A month later, Wired reported the critical detail: under the contract, OpenAI decides when it has reached AGI. The company that defines the word controls the trigger.

Microsoft didn't push to remove the clause because AGI was near. It pushed because the definition was worth billions. If OpenAI declares AGI, the contract changes. If AGI remains unachieved, the partnership continues on current terms. The word isn't a milestone. It's a switch — and the parties who flip it have financial interests in when it flips.

Jensen Huang is not a party to that contract. But when the CEO of the company that supplies every AI lab declares AGI achieved, the claim doesn't exist in a vacuum. It validates spending. It justifies valuations. And it raises a question for every contract, every investment thesis, and every regulatory framework that hinges on the word: achieved by whose definition?

The Bill

If AGI has been achieved, the capital requirements should be declining. The goal is reached. The research is done. What remains is deployment — running what you've built.

That is not what March 23 looks like.

OpenAI is guaranteeing 17.5% returns to private equity firms — and offering early access to new models as a sweetener. The guarantee means OpenAI absorbs downside risk to attract capital. You do not offer guaranteed returns when the product sells itself. You offer them when you need more money than the product alone can justify.

And the electricity: 5 gigawatts by 2030 from Helion Energy, a fusion startup that has not yet produced commercial power. Five gigawatts is roughly the output of five large nuclear reactors, at about one gigawatt each. The electricity is for running AI at scale: the inference era's utility bill. And the seller is a company that OpenAI's CEO invested in and chaired.

Altman stepped down as Helion's board chair ahead of the deal, a governance step that acknowledges the conflict without eliminating it. OpenAI's CEO backed and chaired the energy company. OpenAI is buying its output. The deal may be at market rates. But the structure, in which a CEO's company buys from another company he backed and chaired, is the kind of related-party transaction that public companies disclose in bold type.

The pattern of March 23: AGI declared. Returns guaranteed. Fusion power purchased. The declaration says the work is done. The capital says it's accelerating.

The Reclassification

AGI meant something once: a system that matches human intelligence across any domain. A finish line. The thing you build toward and — if it arrives — changes everything.

Jensen's declaration doesn't use that definition. Neither did Altman's January 2025 blog post, which said OpenAI is "confident we know how to build AGI." Neither do the investors writing checks at $730 billion valuations. The word has been reclassified. It no longer means "the goal has been reached." It means "the products are good enough to sell at this price."

That reclassification is useful. It validates the $650 billion in hyperscaler capex. It justifies the guaranteed returns to PE firms. It keeps the capital flowing into chips, electricity, data centers, and ad sales teams. The word AGI — stripped of its original meaning — is a financing instrument.

The evidence for what AGI actually means in March 2026 isn't in Jensen's interview. It's in the Reuters story and the Axios story that landed the same day. Five gigawatts from a fusion reactor that hasn't been built. Guaranteed returns for investors who need protecting. The word says achieved. The capital says otherwise.