Only 12% of pages cited by AI models also rank in Google's top 10. Ahrefs found this across 1.9 million AI citations. eMarketer confirmed it independently: fewer than 10% of sources cited by ChatGPT, Gemini, and Copilot appear in Google's top results for the same query. You can hold the number-one position on Google and be completely invisible to the AI answering the same question. Two optimization strategies that should be the same game are producing almost entirely non-overlapping results.
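To make the headline metric concrete, here is a minimal sketch of how an overlap rate like this could be computed. For each query, count how many AI-cited URLs also appear in Google's top 10 for that same query, then divide by the total number of citations. The function name, input shapes, and sample URLs are hypothetical. This is not Ahrefs' or eMarketer's actual methodology, and a real study would also need to normalize URLs (trailing slashes, tracking parameters) before matching.

```python
from typing import Dict, List


def citation_overlap_rate(
    ai_citations: Dict[str, List[str]],
    google_top10: Dict[str, List[str]],
) -> float:
    """Share of AI-cited URLs that also rank in Google's top 10 for the same query.

    Both arguments map a query string to a list of URLs (hypothetical inputs).
    """
    total = 0
    overlapping = 0
    for query, cited_urls in ai_citations.items():
        # Only the first 10 Google results count as "top 10".
        top10 = set(google_top10.get(query, [])[:10])
        for url in cited_urls:
            total += 1
            if url in top10:
                overlapping += 1
    # Avoid dividing by zero when there are no citations at all.
    return overlapping / total if total else 0.0


# Hypothetical sample data: 4 citations, 1 of which also ranks in Google's top 10.
ai = {"best crm for startups": ["a.com/crm", "reddit.com/r/saas/x", "g2.com/crm", "b.com/guide"]}
serp = {"best crm for startups": ["a.com/crm", "c.com/list", "d.com/review"]}
print(citation_overlap_rate(ai, serp))  # 0.25
```

Counting per citation rather than per query keeps the metric comparable to a figure phrased as "X% of cited pages."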

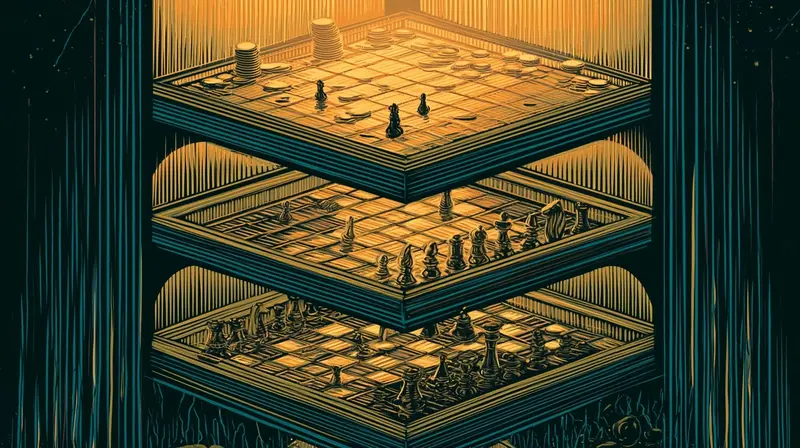

Search Atlas confirmed the divergence across 18,377 matched queries: GPT, Gemini, and Perplexity each decouple from Google independently. These are two different selection functions. One optimizes for link-based relevance, the other for entity structure and answer density; both run against the same corpus and return different results. The industry calls the new discipline "generative engine optimization," or GEO. But that label papers over a deeper problem. There aren't two games. There are three.

Three Games, One Search Box

Game One: Traditional SEO. Keywords, backlinks, domain authority, crawlability, page speed, SERP position. The 1998 engine that still runs. Ninety-five percent of SEO professionals still rank backlinks as "most critical" or "very important." @theseoguy_ published the playbook, the 80/20 of local SEO that still drives traffic, and it works. @prathamgrv pointed out that PageRank math from 1998 still powers search in 2026. The foundations are intact. The economics on top of them are not.

Organic clicks have fallen 42% across 64 sites since AI Overviews expanded. Position #1 CTR dropped from 7.3% to 1.6% in two years. The traditional playbook still produces rankings. It just produces fewer clicks per ranking than it used to, and the trend is accelerating.
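The position-one CTR drop is larger than the two percentages suggest at a glance. A quick worked calculation using only those two CTR figures, with an arbitrary base of 10,000 impressions (an illustrative assumption, not a number from the cited data):

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from before to after, as a fraction (negative = decline)."""
    return (after - before) / before


def expected_clicks(impressions: int, ctr: float) -> float:
    """Clicks implied by a click-through rate over a number of impressions."""
    return impressions * ctr


# Position #1 CTR figures quoted in the text: 7.3% two years ago, 1.6% now.
before, after = 0.073, 0.016
print(f"Relative CTR change: {relative_change(before, after):.1%}")  # Relative CTR change: -78.1%

# Same ranking, same 10,000 impressions (hypothetical), very different traffic.
print(round(expected_clicks(10_000, before)), round(expected_clicks(10_000, after)))  # 730 160
```

In other words, the same number-one ranking now yields roughly a fifth of the clicks it used to. This per-ranking decline is separate from the 42% aggregate drop across sites.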

Game Two: AI Citation Optimization. This game optimizes for getting your brand into LLM training-data consensus and RAG retrieval pools. The signals are different: entity structure over keyword density, multi-source consensus over backlinks, answer-first formatting over engaging narrative leads. @zenorocha described the result: users signing up because AI models recommend the product, with no traditional SEO involved.

ChatGPT processes 60% of AI search traffic and is already competing for the same choice screen. Sessions run 13 minutes versus Google's 6. Perplexity handles 780 million queries per month using real-time RAG against the live web. The optimization target is citation-worthiness: publish something worth citing, format it for easy extraction, and earn third-party validation across Reddit, G2, YouTube, and industry publications. The content structure that earns these citations (answer-first, entity-mapped, schema-rich) directly conflicts with what ranks on Google.
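As one illustration of what "schema-rich, answer-first" can mean in practice, here is a minimal sketch that emits schema.org `FAQPage` markup as JSON-LD, using the standard `Question` and `acceptedAnswer` properties. The brand "ExampleCo" and the question/answer text are hypothetical placeholders, and whether a given AI model actually reads this markup when choosing citations is an assumption the data above does not establish.

```python
import json
from typing import List, Tuple


def faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup (JSON-LD) from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                # Answer-first: the accepted answer states the claim directly.
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical placeholder content for a fictional brand.
markup = faq_jsonld([
    ("What is ExampleCo?", "ExampleCo is a CRM for early-stage startups."),
])
# Embed the printed output inside <script type="application/ld+json"> on the page.
print(markup)
```

Generating the markup from the same (question, answer) pairs that appear in the visible copy keeps the structured data and the page text from drifting apart.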

Game Three: GEO (Google AI Overviews). This game optimizes specifically for being cited inside Google's own AI-generated summaries. It partially overlaps with traditional SEO, since you need Google authority to be featured in AI Overviews, but the optimization target is different: be the cited answer, not the number-one blue link. Traffic from an AI Overview citation doesn't follow the same CTR model. You're visible without being clicked.

@coreyhainesco built a Claude Code skill that audits specifically for AI citation readiness: optimal passages of 134 to 167 words, structured definitions, comparison tables AI can parse, and entity markup. @heygurisingh open-sourced GEO-SEO Claude, a tool that audits for all three surfaces simultaneously. These tools exist because the games require different inputs.
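The passage-length target is the easiest of these checks to reproduce. A minimal sketch, assuming passages are separated by blank lines and words are whitespace-delimited; the function name and splitting rule are illustrative and are not taken from either tool mentioned above.

```python
import re
from typing import List, Tuple

# Word-count window cited above for AI-extractable passages.
MIN_WORDS, MAX_WORDS = 134, 167


def audit_passages(text: str) -> List[Tuple[int, int, bool]]:
    """Return (passage_index, word_count, in_range) for each blank-line-separated passage."""
    # Split on one or more blank lines and drop empty fragments.
    passages = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    results = []
    for i, passage in enumerate(passages):
        count = len(passage.split())
        results.append((i, count, MIN_WORDS <= count <= MAX_WORDS))
    return results


# Hypothetical input: one 150-word passage and one 40-word passage.
sample = ("word " * 150).strip() + "\n\n" + ("word " * 40).strip()
for index, words, ok in audit_passages(sample):
    print(index, words, "ok" if ok else "out of range")
# 0 150 ok
# 1 40 out of range
```

Whitespace splitting is a crude word count (it treats "answer-first" as one word, for example), so treat borderline passages as judgment calls rather than hard failures.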

The Incompatibility Matrix

The three games conflict on almost every dimension that matters for content strategy:

| Dimension | Traditional SEO | AI Citation | GEO (AI Overviews) |
|---|---|---|---|
| Primary goal | SERP rank → clicks | AI model cites your brand | Google's summary features you |
| Content tone | Engaging, narrative, keyword-rich | Factual, dense, answer-first | Conversational, answer-shaped |
| Success metric | Rank, CTR, organic traffic | Citation frequency, share of voice | AI Overview impressions |
| Long-form content | Rewarded (depth, dwell time) | Penalized (answer is buried) | Punished (AI skips to answer) |
| Keyword targeting | Map keywords to pages | Map entities to claims | Match conversational queries |
| Schema markup | Helpful | Critical | Critical |

The conflict is starkest on content structure. The optimal Google-ranking post (a 3,000-word evergreen pillar with internal links and keyword distribution) is not the same object as the optimal LLM-cited explanation (a 400-word, answer-first, entity-mapped Q&A that earns Perplexity citations). Optimizing a page for one works against the other. A business that has to play all three games simultaneously is making trade-offs that no current framework addresses.

The Eighty Percent Trap

Here's the counter-evidence, and it's the reason the industry hasn't reckoned with this yet.

Jeremy Moser, co-founder of uSERP, told Digiday: "80% of GEO is good, fundamental SEO. If a GEO service does not openly tell you that success in AI visibility is 80% good fundamental SEO, they are selling you snake oil." He's right. The same Digiday piece concedes that "the more technical end of GEO — particularly anything touching retrieval architecture for LLM queries — is legitimately new territory."

This is the trap. The 80% overlap means practitioners who built their careers on traditional SEO are correct when they say "GEO is just SEO." Yet a practitioner optimizing specifically for LLM RAG retrieval is playing a genuinely different game. Both are right. Neither is talking to the other. And @kalashvasaniya's hierarchy, "backlinks > GEO > SEO," is itself a Game Two claim masquerading as a universal truth.

Meanwhile, @codyschneiderxx described Claude Code as a power tool for traditional SEO (keyword universe mapping, SERP analysis, content publishing), running on the same agent infrastructure that others use for AI citation optimization. The tools don't know which game you're playing. The practitioner has to.

The Therefore

The traditional SEO expert, the AI citation optimizer, and the GEO specialist are each giving advice that works — within their game. The advice conflicts across games. And no unified playbook exists that solves the allocation problem: given finite content resources, how do you distribute effort across three surfaces with different success metrics, different content structures, and different monetization assumptions?

The zero-click search doesn't eliminate the value of being found. It eliminates the value of being found the old way. The new surfaces reward a different kind of visibility — one the traditional playbook wasn't built to measure.

Backlinks may be the one input that feeds all three games: they're ranking signals for Google, credibility markers for AI models, and authority signals for AI Overviews. But acquiring them for LLM citation authority requires different source targeting than acquiring them for PageRank. Reddit, G2, and YouTube mentions matter more for AI citation than a guest post on a DA-70 blog.

The first unified playbook — the one that maps the allocation problem across all three surfaces with a single content strategy — will be worth more than the tools it orchestrates. The tools already exist. @johnrushx has been iterating on an AI SEO agent since 2022. The audit tools are proliferating. What's missing is the framework that tells a one-person founder: this piece of content serves Game Two, this one serves Game One, and this is the 20% of Game Three that isn't just good SEO. Until that framework exists, every practitioner is optimizing one game and hoping the others don't notice.
