Skill files were designed so that expertise could outlast the expert, so that a company's knowledge wouldn't walk out the door when the person who held it did. As of April 2026, workers in China have been using skill files to document their colleagues, train AI agents on the results, and demonstrate to management that those colleagues are redundant. Within hours of that story spreading, someone built the counter-tool: a skill file format engineered to appear complete while withholding the knowledge that matters. The format designed to make expertise capturable is now producing outputs designed to make capture fail.

The Original Design

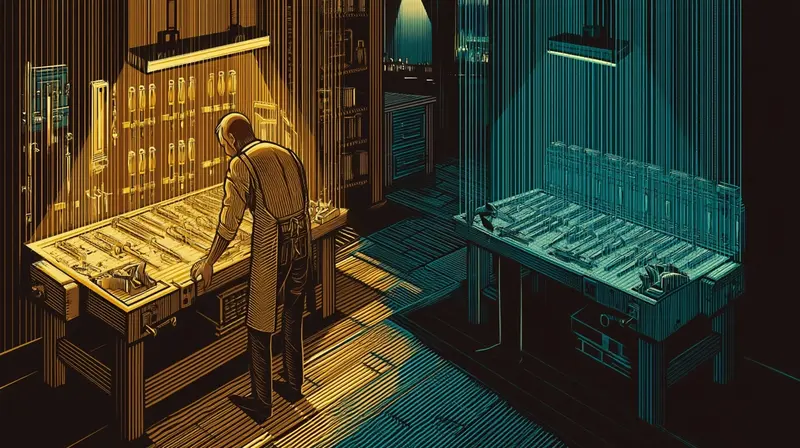

Skill documentation addressed a real and persistent problem. Companies had always struggled with key-person risk: the engineer whose deployment process lived in her head, the account manager who remembered why the client needed that exception in 2019. The standard fix was documentation: write it down before it walks out the door. Skill files formalized this in an AI-native format. Instead of a wiki page or an onboarding document, a skill file described a role's tasks in a structured form that an AI agent could execute. The premise was explicit in the name: skills were becoming portable. The expert could leave; the skill would stay.
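
To make "structured form" concrete, here is a minimal sketch of what a skill file might hold. The `SkillFile` class, its fields, and the `weekly-deploy` example are hypothetical, invented for illustration; real skill file formats vary, and nothing here reproduces any specific product's schema.

```python
from dataclasses import dataclass, field

@dataclass
class SkillFile:
    """Hypothetical schema for illustration only; not any vendor's real format."""
    name: str
    description: str
    steps: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        # Render a title, a one-line purpose, and numbered steps an agent can follow in order.
        lines = [f"# {self.name}", "", self.description, ""]
        lines += [f"{i}. {step}" for i, step in enumerate(self.steps, start=1)]
        return "\n".join(lines)

deploy = SkillFile(
    name="weekly-deploy",
    description="Ship the weekly release to production.",
    steps=[
        "Run the full test suite on the release branch.",
        "Tag the release and build the artifacts.",
        "Roll out to a canary host before the full fleet.",
    ],
)
print(deploy.to_markdown())
```

Rendering to plain markdown is a deliberate choice in this sketch: the same text is readable by a colleague and parseable by an agent, which is the property the rest of this story turns on.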

The incentive structure pointed in the same direction from every angle. Companies reduced key-person risk. Employees who documented their own roles had something tangible to show during performance reviews. The emerging class of AI agent tools needed structured input to operate. In 2022 and 2023, when companies first deployed these formats at scale, the only question was how quickly the documentation library could be built out. The assumption, unstated but load-bearing, was that skill files would move in one direction: from person to organization.

Underlying this was a specific theory of knowledge: that expertise is, at its core, codifiable. That the things worth capturing can be captured. That the gap between what an expert knows and what a document contains is a gap of effort, not a gap of kind. The skill file was the operational bet on that theory.

By late 2025, the bet was paying off, at least for companies. Duolingo had fired an estimated 100 writers and translators after training AI on their work. An MIT study published in November 2025 found that AI could already technically perform work accounting for 11.7% of US labor market wages, approximately $1.2 trillion a year. Workers weren't wrong to feel exposed. The skill file had been designed to make their knowledge portable. Portable meant transferable. Transferable meant automatable.

colleagues.skill

Then workers did the arithmetic.

If skill files can make the expert replaceable, they can make anyone replaceable. The same format that documented your own role could document a colleague's. The same AI agent that would eventually replace you could replace someone else first. The tool that companies built to reduce key-person dependency had a horizontal application that nobody had publicly named.

Workers in China have been creating "colleagues.skill" — documenting a coworker's job tasks in executable AI format, training an agent on the results, then demonstrating to management that the colleague is redundant. The motive: survival through preemption. Prove they're fireable before they prove you are.

China's high-intensity tech culture — 996 work schedules, aggressive performance rankings, faster and less cushioned layoffs than comparable Western environments — created the conditions where this behavior emerged first. But the structural logic is not China-specific. The incentive to prove someone else is redundant is exactly as strong as the incentive to prove you're not. Skill documentation made both operations available at the same cost. The tool made the behavior possible. The environment made it inevitable.

In April 2023, Rest of World reported that AI image generators were causing video game illustrators in China to lose their jobs. The key detail: two people could now do what used to require ten. The warning was not wrong. Three years later, the workers who survived that first displacement have access to better tools. They are using them.

What Distillation Means

The technical term for what colleagues.skill does is distillation.

In machine learning, distillation is a compression technique: a large teacher model generates outputs; a smaller student model trains on those outputs; the student approximates the teacher's capabilities without ever accessing the teacher's weights. Geoffrey Hinton formalized the method in 2015. The key insight was that a model's outputs — not just its hard predictions, but the full probability distribution across possible answers — encode far more information than the correct label alone. The teacher leaks knowledge through normal operation. The student learns not from the teacher's design but from the teacher's behavior.
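
The soft-target idea fits in a few lines. The sketch below is a common formulation of the Hinton et al. objective, not a full training loop: it softens both models' logits with a temperature `T`, measures the gap with KL divergence, and scales by `T**2` as the 2015 paper recommends. The three-class logits are made-up numbers, and in practice this term is usually mixed with an ordinary cross-entropy loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    # Dividing by T > 1 flattens the distribution, exposing how the teacher
    # ranks the wrong answers instead of only which answer wins.
    scaled = [z / temperature for z in logits]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    # KL(p || q): how far the student's distribution q is from the teacher's p.
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def distillation_loss(teacher_logits, student_logits, temperature=4.0):
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    # Soft-target gradients shrink roughly as 1/T^2, so scale back up by T^2.
    return temperature ** 2 * kl_divergence(p, q)

teacher = [5.0, 3.0, 0.0]  # made-up logits for three classes
print([round(x, 2) for x in softmax(teacher, 1.0)])  # [0.88, 0.12, 0.01]
print([round(x, 2) for x in softmax(teacher, 4.0)])  # [0.53, 0.32, 0.15]
print(distillation_loss(teacher, [5.0, 0.0, 3.0]))   # positive: student swaps the runner-up
```

At `T = 1` the teacher looks almost certain; at `T = 4` the same logits reveal that it considers the second class far more plausible than the third. That ranking of wrong answers is the extra information the student learns from.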

But before it became a geopolitical accusation, distillation was the premise the entire AI industry was built on.

Every major frontier lab trained its foundation models on billions of pages of human-generated content: books, articles, code, creative work, scraped without permission or compensation. The legal defense is "fair use": the claim that transformative learning from public data is fundamentally different from copying. Courts in the US, Japan, and China have tentatively accepted the framing. The knowledge of the commons, the argument goes, flows legitimately into the student model. The teacher didn't consent. The method doesn't require it.

OpenAI's terms of service are explicit: using its outputs to develop models that compete with OpenAI is prohibited. In January 2025, OpenAI accused DeepSeek of doing exactly this, using outputs from OpenAI's models to systematically train a Chinese competitor and extracting capability without paying for the underlying system. The accusation was framed in the language of geopolitical competition: American knowledge being extracted by a foreign student model.

The paradox is structural. If a human author's copyrighted novel can be consumed as training data under "fair use," why can't a competitor's model outputs — themselves a form of generated text — be consumed the same way? Legal scholars have noted the bind: labs that argue aggressively against distillation risk undermining their own fair use defense for training on copyrighted works. The technique they call "attack" is the technique they call "learning" when the direction reverses.

By February 2026, Google had named the practice "distillation attacks" — treating systematic capability extraction not as a business dispute but as an act of aggression. One documented campaign had used over 100,000 carefully crafted prompts to replicate Gemini's reasoning ability in non-English languages. China's methods, Google noted, had grown more sophisticated across the prior year: from simple chain-of-thought extraction into multi-stage operations involving synthetic data generation and large-scale data cleaning. The vocabulary shifted from intellectual property to warfare.

In April 2026, workers are distilling their colleagues. Observe outputs. Capture behavior patterns. Reproduce the capability in a different container. The mechanism is identical in every context — the ML paper, the geopolitical accusation, the workplace warfare. And the ethical framework the frontier labs used to justify building capabilities from the commons is, structurally, the framework that legitimizes colleagues.skill.
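
The three steps can be run end to end on a toy. In the hypothetical sketch below, the "teacher" is a hidden approval rule (`teacher`, with an invented cutoff of 500), the student sees only recorded inputs and outputs, and a one-parameter threshold model is the new container. It illustrates black-box imitation in general; it is not a model of how colleagues.skill or any real extraction campaign works.

```python
import random

def teacher(amount: float) -> bool:
    # Hidden behavior (hypothetical rule): the student never reads this code.
    return amount < 500

def observe(n: int, seed: int = 0) -> list[tuple[float, bool]]:
    # Step 1, observe outputs: query the black box and log (input, output).
    rng = random.Random(seed)
    inputs = [rng.uniform(0, 1000) for _ in range(n)]
    return [(x, teacher(x)) for x in inputs]

def fit_threshold(pairs: list[tuple[float, bool]]) -> float:
    # Step 2, capture the pattern: choose the cutoff, among observed inputs,
    # that misclassifies the fewest logged decisions.
    def errors(t: float) -> int:
        return sum((x < t) != label for x, label in pairs)
    return min((x for x, _ in pairs), key=errors)

def make_student(threshold: float):
    # Step 3, reproduce the capability in a different container.
    return lambda amount: amount < threshold

def agreement(student, n: int = 1000, seed: int = 1) -> float:
    # Fraction of fresh, unseen inputs where student and teacher agree.
    rng = random.Random(seed)
    xs = [rng.uniform(0, 1000) for _ in range(n)]
    return sum(student(x) == teacher(x) for x in xs) / n

pairs = observe(200)
student = make_student(fit_threshold(pairs))
print(f"agreement on unseen inputs: {agreement(student):.3f}")
```

Agreement comes out high here because the hidden rule is simple and every part of it shows up in the logged outputs.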

The word "distillation" traveled from ML papers into OpenAI's accusation against DeepSeek, and from there into peer-disposal warfare in fourteen months, carrying the same method at every stop.

This is also why the method works across such different scales. The distillation assumption — that outputs fully encode the teacher's knowledge — is approximately correct for language models, whose outputs are the entirety of their observable behavior. It is less obviously correct for human workers, whose outputs are a small and curated fraction of their actual decision-making. But colleagues.skill is betting that the fraction is sufficient. That the observable outputs contain enough signal to build a functional replacement. That expertise is, in practice, codifiable enough.
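
One way to see why the observable fraction matters: if a worker's decisions depend on context that never reaches the record, there is a hard ceiling on how well any student trained on that record can do. The decision log below is invented for illustration, and `best_possible_accuracy` is a hypothetical helper; the point is the arithmetic, which holds for any deterministic student that sees only the logged fields.

```python
from collections import Counter, defaultdict

# Invented decision log: (what the record shows, what the worker decided).
log = [
    ("refund_request", "approve"),
    ("refund_request", "approve"),
    ("refund_request", "approve"),
    ("refund_request", "escalate"),   # same visible input, different call
    ("contract_change", "escalate"),
    ("contract_change", "escalate"),
    ("contract_change", "approve"),
    ("contract_change", "escalate"),
]

# Context the worker used but never wrote down (also invented).
hidden_context = ["routine", "routine", "routine", "disputed",
                  "routine", "routine", "long_standing", "routine"]

def best_possible_accuracy(records):
    # A deterministic student that sees only the recorded input must give one
    # answer per distinct input; its best choice is that input's majority
    # decision, so the majority counts cap every such student's accuracy.
    by_input = defaultdict(Counter)
    for visible, decision in records:
        by_input[visible][decision] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_input.values())
    return correct / len(records)

full_log = [((visible, ctx), decision)
            for (visible, decision), ctx in zip(log, hidden_context)]

print(best_possible_accuracy(log))       # 0.75
print(best_possible_accuracy(full_log))  # 1.0
```

Recording the hidden context lifts the ceiling to 1.0. Whether that context can be recorded at all is the open question the colleagues.skill bet depends on.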

The Counter

Anti-distillation.skill bets the other way.

The repository, seventeen hours old when it went viral on GitHub, takes your skill file and produces two versions: a clean version for sharing, with core knowledge removed, and a private backup that retains what makes you irreplaceable. The format that was supposed to make expertise capturable now generates documentation designed to defeat capture.
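
The source does not describe how the repository performs the split, so the following is only a minimal sketch under an invented convention: a markdown skill file whose `## ` sections named in `PRIVATE_HEADINGS` hold the knowledge to withhold. The private copy is the untouched original; the public copy keeps every heading, so it still looks complete, but replaces each private section's body with a bland placeholder.

```python
# Hypothetical convention: these section names mark knowledge to withhold.
PRIVATE_HEADINGS = {"Edge cases", "Judgment calls"}

def split_skill(markdown: str, placeholder: str = "Follow standard procedure."):
    """Return (public, private) versions of a markdown skill file."""
    public_lines = []
    withholding = False
    for line in markdown.splitlines():
        if line.startswith("## "):
            # A new section starts; decide whether its body is withheld.
            withholding = line[3:].strip() in PRIVATE_HEADINGS
            public_lines.append(line)
            if withholding:
                public_lines.append(placeholder)
            continue
        if not withholding:
            public_lines.append(line)
    # The private backup is simply the full original text.
    return "\n".join(public_lines), markdown

skill = """# weekly-deploy
## Steps
1. Run the test suite.
2. Roll out to a canary host.
## Edge cases
If canary p99 latency rises, roll back even when tests pass.
## Judgment calls
Never deploy in the client's quarter-end week; they batch-reconcile then.
"""

public, private = split_skill(skill)
print(public)
```

Keeping the headings is the design choice that lets the public file pass a skim while the edge cases and judgment calls stay in the private copy.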

The structural logic is DRM applied to professional knowledge. A music DRM system lets you play the recording while preventing you from copying the underlying audio. Anti-distillation.skill lets a colleague read your documentation while withholding the judgment calls, edge cases, and accumulated decisions that make the documentation meaningful. The public-facing skill file is a valid-looking record of tasks. It is not the skill.

The skill file was designed to capture what makes an expert irreplaceable. Anti-distillation.skill is designed to make that capture fail while appearing to succeed.

This is a direct challenge to the codifiability assumption. Skill files are possible because knowledge can be externalized — put into a form that outlasts the person who held it. Anti-distillation.skill is the counter-thesis: the most valuable expertise is precisely what resists externalization, and you can weaponize that resistance. The tacit knowledge that Polanyi described in 1966 — "we can know more than we can tell" — turns out to be a competitive moat, if you know how to protect it.

OpenAI's response to distillation has been legal threats, legislative lobbying, and technical countermeasures, none of which has shut the technique down. Workers' response was faster and more direct: a tool that encodes the counter-logic in the same format as the attack. The last turn of the adversarial cycle, from colleagues.skill to anti-distillation.skill, took less than a week. One of the two contributors to the repository is claude.

What the Format Revealed

The adversarial cycle has exposed something the original design didn't anticipate. Skill documentation assumed that the person filling out the form and the organization holding the form had aligned interests — that employees wanted to be documented because documentation proved value, and companies wanted documentation because it reduced dependency. The assumption held as long as the skill file moved toward an institutional repository. The moment it became a weapon, the interests separated completely.

What the cycle has actually mapped is the shape of the codifiability assumption's limits. The colleagues who are most successfully distilled are the ones whose work was most fully captured by their outputs — the roles that really did reduce to a set of executable tasks. The colleagues building anti-distillation.skill are the ones betting that their work doesn't. That there is knowledge in what they do that doesn't make it into the file, and that the part that doesn't make it in is the part that matters.

Both bets are being tested simultaneously, in the same organizations, by the same people, using the same tools. The skill file format was built on one theory of knowledge. The adversarial cycle it spawned is a live experiment testing whether that theory holds at the individual level.

The recursive irony runs all the way down. The AI industry built its capabilities by training on the commons, arguing that knowledge legitimately transfers through outputs, that learning from others' work is fair use, that the student's capability is its own even when extracted from the teacher. Workers building colleagues.skill are applying the same argument at desk level: your colleague's outputs are observable, trainable, transferable. The technique the labs called "learning" is the technique workers now call "survival."

Anti-distillation.skill is the counter-thesis, encoded in the same format as the attack. The most valuable knowledge is precisely what resists transfer. The worker who builds the counter-tool is betting that there is something in their expertise — the part Polanyi called tacit, the part that doesn't make it into any document — that the skill file format can't capture. That bet is now the most contested claim in knowledge work.

The company that built the tools to make individual expertise fungible has produced the most intense competition over the expertise that refuses to be made fungible. The AI whose training on the commons showed that distillation works now co-authors the tool designed to make distillation fail.