TEXXR stands for "tech extended release." The premise is simple: news is not disposable. The daily tech press generates hundreds of articles. Each one covers an event. But events are chapters, not stories. The story is the arc — how an entity changed over months, what a pattern of coverage reveals about power shifts, where two companies' trajectories intersected without either noticing. TEXXR is the platform that finds those arcs.

The Corpus

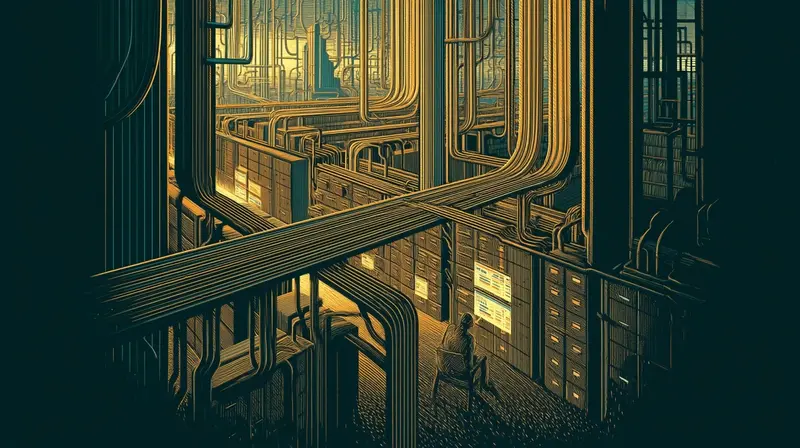

The foundation is a database of over 130,000 articles from 2014 to present, updated daily via automated pipelines. Every article is indexed, searchable, and connected to every other article through three layers of analysis.

This isn't a sample. It's the full record — a decade of tech coverage, structured for longitudinal research. Every funding round, every product launch, every lawsuit, every earnings call. The kind of corpus that lets you ask not just "what happened today" but "how did we get here."

Three Layers

Vector embeddings. Every article is converted into a 1,536-dimensional vector that captures its semantic meaning. This makes it possible to search by concept rather than keyword. A query for "companies building their own AI chips" returns results about Arm's AGI CPU, Google's TPU, Amazon's Trainium, and Meta's MTIA — even when none of those articles use that exact phrase. Two articles that share no words can be recognized as covering the same story. Similarity becomes measurable.
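
To make "similarity becomes measurable" concrete, here is a minimal sketch using cosine similarity, a common way to compare embedding vectors. The source does not say which metric TEXXR uses, so the metric is an assumption, and the three-dimensional toy vectors and article titles are hypothetical stand-ins for real 1,536-dimensional embeddings.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

def rank_by_similarity(query, articles):
    """Return (title, score) pairs sorted from most to least similar."""
    scored = [(title, cosine_similarity(query, vec)) for title, vec in articles.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Toy 3-dimensional vectors (hypothetical values, not real embeddings).
articles = {
    "custom AI accelerator announced": [0.9, 0.1, 0.0],
    "in-house training silicon ships": [0.8, 0.2, 0.1],
    "quarterly earnings call recap": [0.0, 0.2, 0.9],
}
query = [1.0, 0.0, 0.0]  # stands in for the embedded search query
ranking = rank_by_similarity(query, articles)
```

The two chip-related titles share no words, yet both rank above the earnings recap because their vectors point in nearly the same direction as the query.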

Entity extraction. Natural language processing identifies every company, person, product, and technology in each article. Apple, Tim Cook, iPhone, Core ML: each gets tagged and indexed. This turns unstructured headlines into a searchable knowledge base of who did what, when. It also enables momentum detection: which entities are accelerating in coverage, which are fading, and which just broke from their usual pattern.
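
One simple way to approximate momentum detection is to compare an entity's recent mention counts against an earlier baseline window. The sketch below assumes weekly mention counts per entity are already available. The function name, the window size, and the counts are hypothetical, and the source does not describe TEXXR's actual method.

```python
from statistics import mean

def momentum(weekly_counts, window=4):
    """Ratio of the mean of the last `window` weeks to the mean of the
    `window` weeks before that. Above 1.0 suggests accelerating coverage,
    below 1.0 suggests fading coverage."""
    if len(weekly_counts) < 2 * window:
        raise ValueError("need at least 2 * window weeks of counts")
    recent = mean(weekly_counts[-window:])
    baseline = mean(weekly_counts[-2 * window:-window])
    if baseline == 0:
        # No earlier coverage: treat any recent coverage as unbounded growth.
        return float("inf") if recent > 0 else 1.0
    return recent / baseline

# Hypothetical weekly mention counts, oldest first.
counts = {
    "EntityA": [5, 6, 5, 4, 9, 12, 15, 20],    # coverage is rising
    "EntityB": [20, 18, 22, 20, 10, 8, 6, 4],  # coverage is falling
}
scores = {name: momentum(series) for name, series in counts.items()}
```

A ratio is easy to read but noisy for entities with very few mentions, so a production system would likely add a minimum-volume threshold.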

Knowledge graph. A hyperedge extraction pipeline reads every article and identifies structured relationships: "OpenAI → partnership → Microsoft," "Arm → launch → AGI CPU," "Meta → legal → New Mexico." Over 85,000 of these edges form a knowledge graph that tracks how entities relate to each other and how those relationships change over time. When Arm, after 35 years as a chip designer, launched its own competing chip, the graph's regime detection flagged it as the company's largest identity break in a decade.
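
The sketch below illustrates one way dated edges could be stored and a regime change scored. It compares the mix of relation types an entity had in an earlier period with the mix in a later one, using total variation distance. That metric is swapped in for illustration; the source does not describe how TEXXR's regime detection works. The `Edge` type, `ExampleCo`, and all edge data are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class Edge:
    source: str
    relation: str
    target: str
    year: int

def relation_profile(edges, entity, years):
    """Share of each relation type among `entity`'s outgoing edges in `years`."""
    counts = Counter(
        e.relation for e in edges if e.source == entity and e.year in years
    )
    total = sum(counts.values())
    return {rel: n / total for rel, n in counts.items()} if total else {}

def profile_shift(before, after):
    """Total variation distance: 0.0 for identical profiles, 1.0 for disjoint ones."""
    relations = set(before) | set(after)
    return 0.5 * sum(abs(before.get(r, 0.0) - after.get(r, 0.0)) for r in relations)

# Hypothetical edges for a made-up company.
edges = [
    Edge("ExampleCo", "licensing", "PartnerA", 2023),
    Edge("ExampleCo", "licensing", "PartnerB", 2023),
    Edge("ExampleCo", "licensing", "PartnerC", 2024),
    Edge("ExampleCo", "partnership", "PartnerD", 2024),
    Edge("ExampleCo", "launch", "ProductX", 2025),
    Edge("ExampleCo", "launch", "ProductY", 2025),
    Edge("ExampleCo", "licensing", "PartnerE", 2025),
    Edge("ExampleCo", "legal", "RegulatorZ", 2025),
]
before = relation_profile(edges, "ExampleCo", {2023, 2024})
after = relation_profile(edges, "ExampleCo", {2025})
shift = profile_shift(before, after)  # larger values suggest a bigger break
```

Here the entity moves from mostly licensing edges to mostly launch edges, so the shift score is high. Ranking entities by this score over a sliding window would be one way to surface candidates for an "identity break."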

The Editorial Layer

The tools find signals. The editorial posts interpret them. Every post starts with a signal from the data — a z-score spike, a knowledge graph regime change, a semantic drift inflection — and traces the arc that signal reveals.
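
As a rough illustration of a z-score spike, the sketch below measures how far the latest daily mention count sits from an entity's recent history, in standard deviations. The counts and the threshold of 3 are hypothetical; the source does not state which window or threshold TEXXR uses.

```python
from statistics import mean, stdev

def z_score(history, latest):
    """Number of sample standard deviations `latest` lies from the mean of `history`."""
    if len(history) < 2:
        raise ValueError("need at least two historical values")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        raise ValueError("history has no variation, z-score is undefined")
    return (latest - mu) / sigma

def is_spike(history, latest, threshold=3.0):
    """True when the latest value exceeds the (assumed) z-score threshold."""
    return z_score(history, latest) > threshold

# Hypothetical daily mention counts for one entity over the past week.
history = [4, 6, 5, 5, 4, 6, 5]
spike_today = is_spike(history, latest=12)  # far above the usual range
quiet_today = is_spike(history, latest=5)   # exactly at the mean
```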

These aren't news summaries. They're constellations: multiple data points connected into a pattern that no single article makes visible. Some examples of what the tools surface:

- The Architect's Chip — Knowledge graph regime detection flagged Arm's largest identity break in a decade. The 10-year arc from chip designer to chip competitor was visible in the structured data before it was obvious in headlines.

- The Convergence — Edge-arc comparison revealed that OpenAI and Anthropic arrived at identical coverage profiles from opposite starting points. Two companies founded on different principles, shaped by the same market into the same thing.

- The Suboptimal Move — Similar-article search connected a 2026 chess feature to a 2023 Go breakthrough and the 1997 Deep Blue match — a 29-year arc of human-AI competition, surfaced by vector proximity.

What Makes It Different

Most news platforms optimize for recency. TEXXR optimizes for depth. The value isn't today's article — it's the arc across months and years. The tools exist to find what no single article reveals: the slow shifts, the converging trajectories, the moments when an entity's meaning quietly changed.

The data is the differentiator. A decade of coverage, fully embedded, fully extracted, fully graphed. Every query runs against the full corpus. Every pattern is grounded in the actual record — not summaries, not abstractions, not vibes. The articles themselves, structured for analysis.

How to Use It

- Browse. The chronicle shows every article by date, searchable by entity or text. Start with today and work backward.

- Search. /explore runs semantic search across the full corpus. Search by meaning, not keywords.

- Analyze. /arc visualizes topic evolution over quarters. /drift maps how an entity's meaning changes over time. /nexus explores the knowledge graph directly.

- Read. /posts for daily editorial analysis — patterns and arcs surfaced by the tools.

- Build. /pulse for API access. Search, entities, edges, momentum, and more — all programmable.